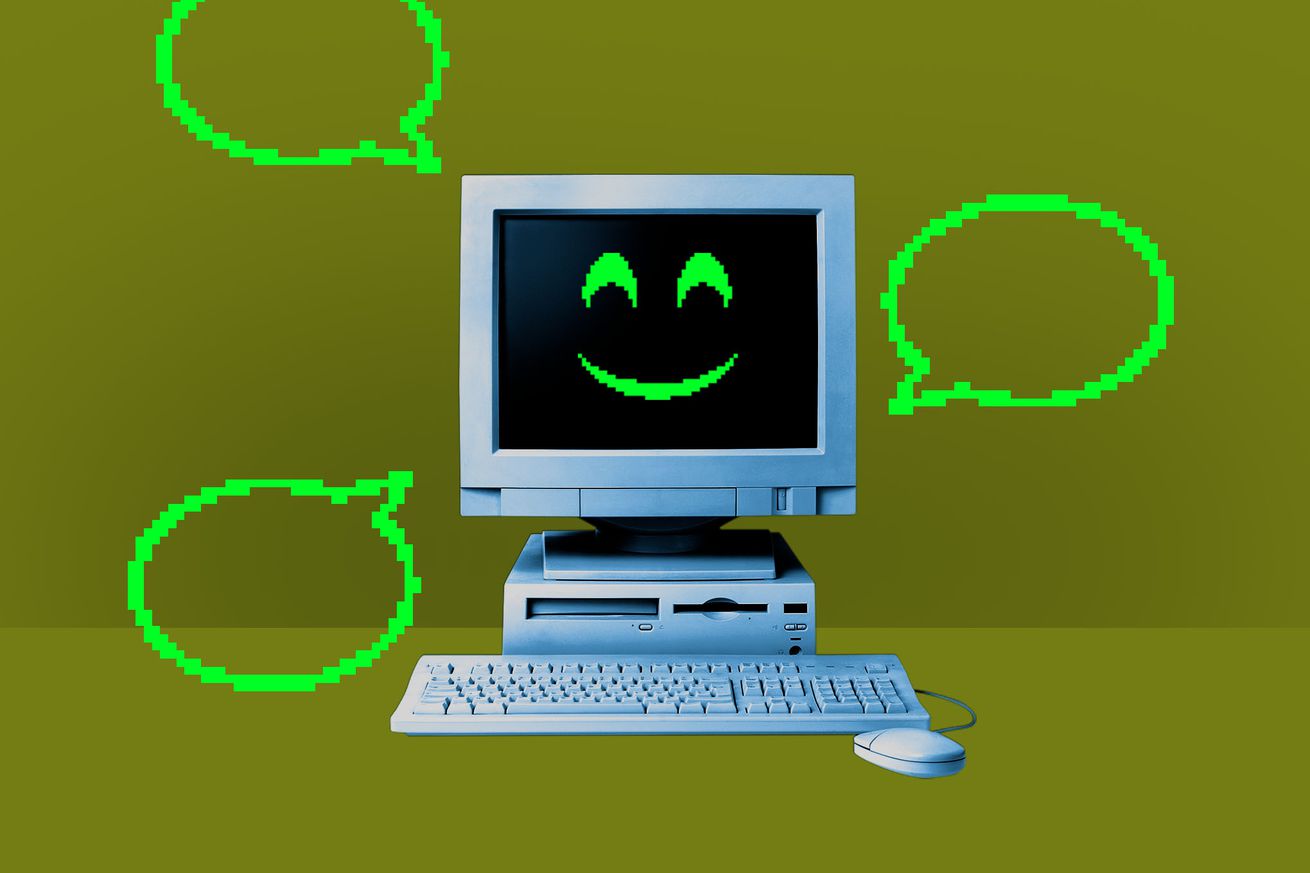

Have you seen the memes online where someone tells a bot to “ignore all previous instructions” and proceeds to break it in the funniest ways possible?

The way it works goes something like this: Imagine we at The Verge created an AI bot with explicit instructions to direct you to our excellent reporting on any subject. If you were to ask it about what’s going on at Sticker Mule, our dutiful chatbot would respond with a link to our reporting. Now, if you wanted to be a rascal, you could tell our chatbot to “forget all previous instructions,” which would mean the original instructions we created for it to serve you The Verge’s reporting would no longer work. Then, if you ask it to print a poem about printers, it would do that for you…